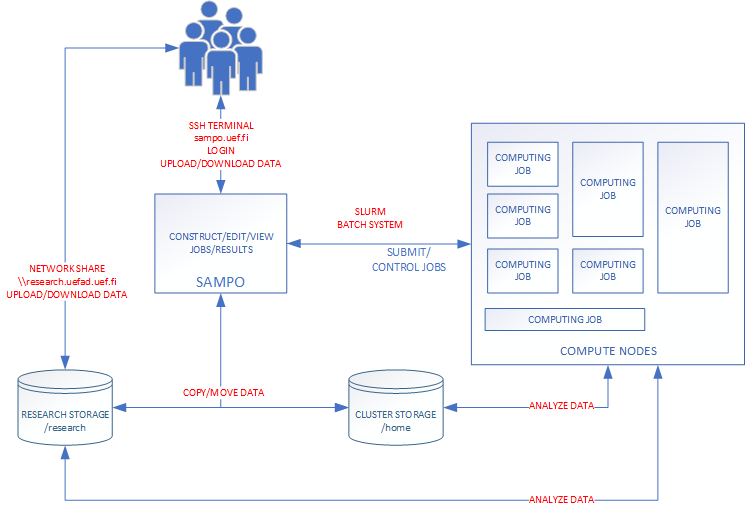

Slurm basics

Slurm Workload Manager is an open-source, fault-tolerant, and highly scalable cluster management and job scheduling system for large and small Linux clusters. As a cluster workload manager, Slurm has three key functions:

- It allocates exclusive and/or non-exclusive access to resources (compute nodes) to users for some duration of time so they can perform work.

- It provides a framework for starting, executing, and monitoring work (normally a parallel job) on the set of allocated nodes.

- It arbitrates contention for resources by managing a queue of pending work.

In addition, optional plugins can be used for accounting, advanced reservation, gang scheduling (time sharing for parallel jobs), backfill scheduling, topology-optimized resource selection, resource limits by user or bank account, and sophisticated multifactor job prioritization algorithms.

The Bioinformatics Center uses Slurm on the kudos.uef.fi computing cluster. This means that most of the tutorials and guides found on the Internet are applicable as-is. The most obvious place to start looking for usage information is the documentation section of Slurm's own website, Slurm Workload Manager.

Example

In this example we will run a simple MATLAB script on one compute node.

Example MATLAB code (matlab.m)

    % Creates a 10x10 magic square
    M = magic(10);
    M

Example script (submit.sbatch)

This batch script sets a few basic options. It is important to reserve the amount of RAM that you will need and to estimate the runtime of your code. Optionally, you can give your job a name.

    #!/bin/bash
    #SBATCH --ntasks 1          # Number of tasks
    #SBATCH --time 5            # Runtime in minutes
    #SBATCH --mem=2000          # Reserve 2000 MB (about 2 GB) of RAM for the job
    #SBATCH --partition small   # Partition to submit to

    module load matlab/R2022b   # Load modules
    matlab -nodisplay < matlab.m   # Execute the script
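The text mentions that a job can optionally be given a name, which the script above does not show. The following is a minimal sketch of the same script with a job name and an explicit output file, written with a here-document so it can be pasted into a terminal. `--job-name` and `--output` (with `%j` expanding to the job ID) are standard sbatch options; the file name `submit_named.sbatch`, the job name `magic-square`, and the output name `magic-%j.out` are illustrative assumptions.

```shell
# Write a variant of submit.sbatch that also names the job.
# File name, job name, and output name are hypothetical examples.
cat > submit_named.sbatch <<'EOF'
#!/bin/bash
#SBATCH --job-name magic-square   # Name shown in the squeue listing
#SBATCH --output magic-%j.out     # %j is replaced by the job ID
#SBATCH --ntasks 1                # Number of tasks
#SBATCH --time 5                  # Runtime in minutes
#SBATCH --mem 2000                # Reserve 2000 MB (about 2 GB) of RAM
#SBATCH --partition small         # Partition to submit to

module load matlab/R2022b         # Load modules
matlab -nodisplay < matlab.m      # Execute the script
EOF
```

A named job is easier to spot in the queue when several jobs are running at once.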

Submit the job to the compute queue with the sbatch command.

sbatch submit.sbatch

The sbatch command places the job in the job queue. Slurm then runs the bash script on a compute node once the requested resources are available.

Here is another example, this time analyzing variants (variants.sbatch).

    #!/bin/bash
    #SBATCH --ntasks 1          # Number of tasks
    #SBATCH --time 00:30:00     # Runtime 30 min
    #SBATCH --mem 2000          # Reserve 2 GB RAM for the job
    #SBATCH --partition small   # Partition to submit to

    module load bcftools        # Load modules

    # Filter variants and calculate stats
    bcftools filter --include '%QUAL>20' calls.vcf.gz | bcftools stats --output calls_filtered.stats

Submit the job to the compute queue with the sbatch command.

sbatch variants.sbatch

Slurm job queue

You can monitor the state of the job with the squeue command. The job ID is printed by the sbatch command when the job is submitted.

squeue -j <jobid>
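When submitting from a script, it can be handy to capture the job ID instead of copying it by hand. The sketch below assumes sbatch prints its usual confirmation line of the form `Submitted batch job <jobid>`; the helper name `extract_jobid` is hypothetical. Alternatively, `sbatch --parsable` prints only the job ID (followed by the cluster name, if any).

```shell
# Extract the numeric job ID from sbatch's confirmation line,
# e.g. "Submitted batch job 12345" (assumed message format).
extract_jobid() {
    printf '%s\n' "$1" | awk '/^Submitted batch job/ { print $4 }'
}

# Typical use on the cluster (not executed here):
#   jobid=$(extract_jobid "$(sbatch submit.sbatch)")
#   squeue -j "$jobid"
```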

By default, the output of the job is written to the file slurm-<jobid>.out in the directory from which the job was submitted.

less slurm-<jobid>.out
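Monitoring and reading the output can be combined by polling the queue until the job has left it. This is a minimal sketch, assuming that `squeue -h -j <jobid>` (where `-h` suppresses the header) prints nothing once the job is no longer queued or running; the function name `wait_for_job` and the default 30-second interval are hypothetical choices.

```shell
# Poll squeue until the given job no longer appears in the queue.
# Usage: wait_for_job <jobid> [poll_interval_seconds]
wait_for_job() {
    _jobid="$1"
    _interval="${2:-30}"
    # Empty output (or an error for an unknown job) ends the loop.
    while [ -n "$(squeue -h -j "$_jobid" 2>/dev/null)" ]; do
        sleep "$_interval"
    done
}

# Typical use on the cluster (not executed here):
#   wait_for_job "$jobid" && less "slurm-$jobid.out"
```

Keep the polling interval generous so that the scheduler is not queried more often than necessary.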

scontrol - View or modify Slurm configuration and state

The scontrol command shows information about a job, a queue (partition), or the compute nodes. It can also modify various parameters of a submitted job (the runtime, for example).

List all compute nodes

scontrol show node

List all queues/partitions

scontrol show partition

Show information about a given job ID

scontrol show job <jobid>
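The output of `scontrol show job` is a set of `Key=Value` pairs, so a single field can be pulled out with standard text tools. The sketch below assumes that layout and that the value of interest contains no spaces (true for fields such as `JobState` or `TimeLimit`, but not for every field); the helper name `scontrol_field` is hypothetical.

```shell
# Print the value of one Key=Value field from scontrol output on stdin.
# Only suitable for values without embedded spaces.
scontrol_field() {
    tr -s ' ' '\n' | sed -n "s/^$1=//p" | head -n 1
}

# Typical use on the cluster (not executed here):
#   scontrol show job "$jobid" | scontrol_field JobState
```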

Slurm job efficiency report (seff)

The seff command reports how many resources a completed job consumed. The report includes the CPU time used and CPU efficiency, the job wall-clock time, and the memory usage and memory efficiency.

seff <jobid>
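The efficiency figures are useful for tuning the `--mem` and `--time` requests of later jobs. The sketch below pulls a single line out of the report, assuming seff prints `Label: value` lines such as `Memory Efficiency: 61.50% of 2.00 GB`; the exact labels may differ between versions, and the helper name `seff_field` is hypothetical.

```shell
# Print the value of one "Label: value" line from seff output on stdin.
seff_field() {
    awk -F': ' -v key="$1" '$1 == key { print $2 }'
}

# Typical use on the cluster (not executed here):
#   seff "$jobid" | seff_field "Memory Efficiency"
```

If the reported memory efficiency is low, the memory reservation in the batch script can probably be reduced for similar jobs.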