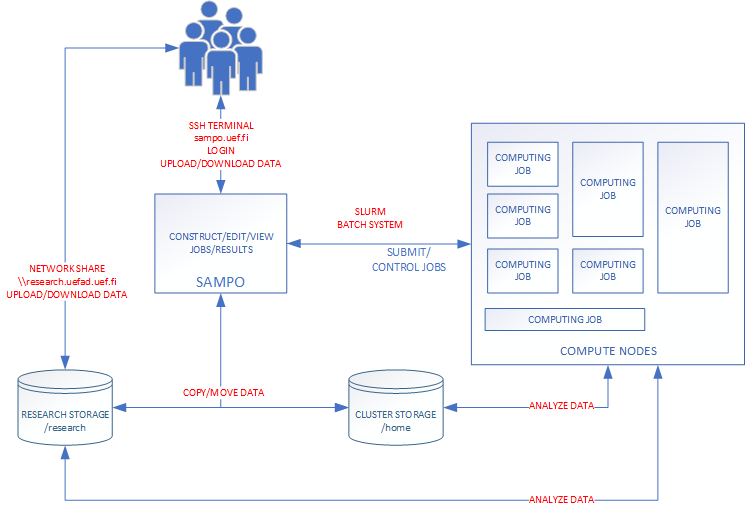

Slurm Workload Manager

Slurm Workload Manager is an open source job scheduler that controls programs executed in the background. These background programs are called jobs. The user defines a job with parameters such as the run time, the number of tasks, the number of required CPU cores, the amount of required memory (RAM), and the program(s) to execute. Jobs defined this way are called batch jobs. Batch jobs are submitted to a common job queue (partition) that is shared with other users, and Slurm executes the submitted jobs automatically in turn. After a job completes (or an error occurs), Slurm can optionally notify the user by email.

In addition to batch jobs, the user can reserve a compute node for an interactive job. The request waits for its turn in the queue, and when its turn comes the user is placed on the reserved node and can execute commands there. When the reserved time is over, the session is terminated.
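As an illustration, the job parameters listed above can be written as `#SBATCH` directives at the top of a shell script. The following is a minimal sketch, not a site-specific template: the job name, resource amounts, output file name, and email address are placeholder assumptions, and the final command only prints where the job runs.

```shell
#!/bin/bash
# Minimal batch job sketch; all values below are illustrative placeholders.
#SBATCH --job-name=example
# small is the default partition, so this line is optional.
#SBATCH --partition=small
# Run time as HH:MM:SS.
#SBATCH --time=00:10:00
#SBATCH --ntasks=1
#SBATCH --cpus-per-task=2
#SBATCH --mem=4G
# %j expands to the job ID.
#SBATCH --output=example-%j.out
# Optional email notification; replace the placeholder address.
#SBATCH --mail-type=END,FAIL
#SBATCH --mail-user=user@example.com

# Slurm sets SLURM_JOB_ID inside a job; the fallback covers runs outside Slurm.
msg="Job ${SLURM_JOB_ID:-not-in-slurm} running on $(hostname)"
echo "$msg"
```

Saved under a hypothetical name such as `example.sh`, a script like this would typically be submitted with `sbatch example.sh`. An interactive session is commonly requested with `srun --pty bash`, combined with the same kind of resource options.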

Slurm Partitions on kudos.uef.fi

- small: 8 out of 10 nodes, maximum run time 3 days

- longrun: 3 out of 10 nodes, maximum run time 14 days

- gpu: 2 nodes, maximum run time 3 days

Explanation of the partitions

Compute nodes are grouped into multiple partitions, and each partition can be considered a job queue. Partitions can have multiple constraints and restrictions. For example, access to certain partitions can be limited by user or group, or the maximum running time can be restricted.
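The partitions and their limits can usually be inspected on the cluster itself with `sinfo`. The sketch below assumes it is run on a login node where the Slurm client tools are on the PATH; the `sinfo_status` variable is only a helper introduced for this example.

```shell
#!/bin/bash
# List the partitions with their time limit and node count.
# %P = partition name, %l = maximum run time, %D = number of nodes.
if command -v sinfo >/dev/null 2>&1; then
    sinfo --partition=small,longrun,gpu --format="%P %l %D"
    sinfo_status="listed"
else
    # Not on a Slurm login node, or Slurm is not on the PATH.
    echo "sinfo not found; run this on the cluster login node" >&2
    sinfo_status="unavailable"
fi
```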

The small partition is the default partition for all jobs that the user submits. The user can reserve a maximum of 1 node per job. The default run time is 5 minutes and the maximum is 3 days.

The longrun partition is for long-running jobs, and only one node is available for this use. The default run time is 5 minutes and the maximum is 14 days.

The gpu partition is for GPU jobs (CUDA jobs). The user can reserve 2 nodes with 8 x NVIDIA A100/40 GB. The default run time is 5 minutes and the maximum is 3 days.
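Because the default run time is only 5 minutes, a GPU job normally needs an explicit `--time` in addition to the partition. The sketch below shows one way such a request might look. It assumes that the GPUs are exposed as a generic resource named `gpu`, which is a common Slurm convention but is not confirmed for this cluster; the job name, run time, and memory are placeholders, and `gpu_check` is a helper variable used only here.

```shell
#!/bin/bash
# GPU job sketch; values are illustrative placeholders.
#SBATCH --job-name=gpu-example
#SBATCH --partition=gpu
# Assumption: GPUs are configured as the generic resource "gpu".
#SBATCH --gres=gpu:1
# Explicit run time (HH:MM:SS), within the 3 day limit of the partition.
#SBATCH --time=01:00:00
#SBATCH --ntasks=1
#SBATCH --mem=8G

# Show the GPU(s) visible to the job, if the NVIDIA tools are installed.
if command -v nvidia-smi >/dev/null 2>&1; then
    nvidia-smi
    gpu_check="ran"
else
    echo "nvidia-smi not found on this node" >&2
    gpu_check="skipped"
fi
```

For the longrun partition, the same pattern applies with `--partition=longrun` and a run time in the `days-hours:minutes:seconds` form, for example `--time=7-00:00:00`.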